- Key Takeaways

- The Mess Nobody Wants to Admit

- What a Knowledge Engine Actually Does

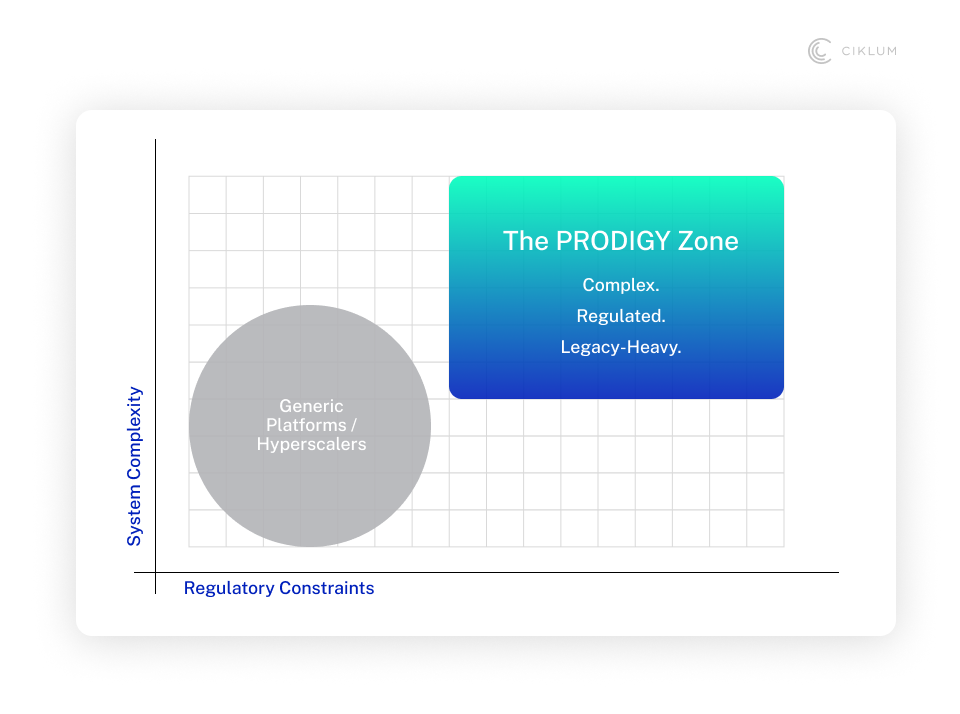

- PRODIGY: Built for the Environments Most Platforms Avoid

- The Real Bottleneck Isn't Code. It's Everything Before It.

- Where Enterprise AI Goes Quiet: Legacy Systems and Regulation

- The Question Every Executive Should Ask Before Their Next AI Investment

Key Takeaways

- A governed knowledge layer is what separates AI as a feature from AI as infrastructure.

- A Knowledge Engine connects, structures, governs, and activates enterprise context.

- Enterprise-wide agents require orchestration and context, not better prompts.

- Compliance failures kill more pilots than bad algorithms.

- The foundation determines the lifespan of your AI strategy.

The Mess Nobody Wants to Admit

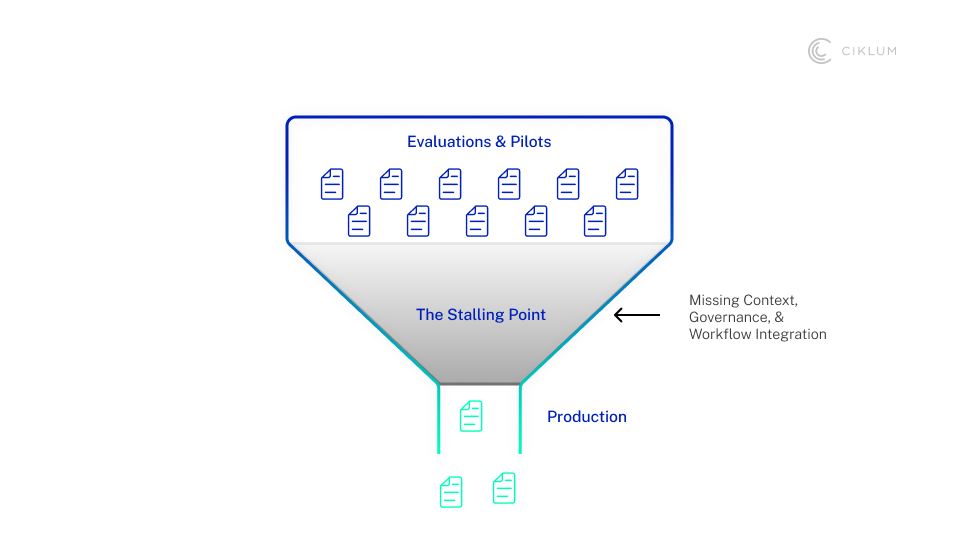

Long gone are the days when leadership teams debated over “should we use GenAI?” The question now is harder and more specific: “Why do so many pilots stall the moment it is time to scale?

Here is a scenario that will feel familiar to many enterprises. Your organisation has run ten AI pilots in the last 18 months. Three are in production. Two of those are barely used. The others stalled somewhere between proof-of-concept and the compliance team.

The irony is that knowledge (the thing AI is supposed to act on) is scattered across the same places it always was. Email threads. SharePoint folders nobody has updated since 2019. A Confluence wiki describing processes that the business changed two years ago. A Jira backlog that is half-accurate at best.

What is missing is a coherent, governed layer that turns scattered knowledge into something AI can actually use, audit, and trust. That is what a knowledge engine is. Without one, you just have a collection of expensive experiments.

Without a knowledge layer, AI becomes another siloed tool rather than the operating system for work.

What a Knowledge Engine Actually Does

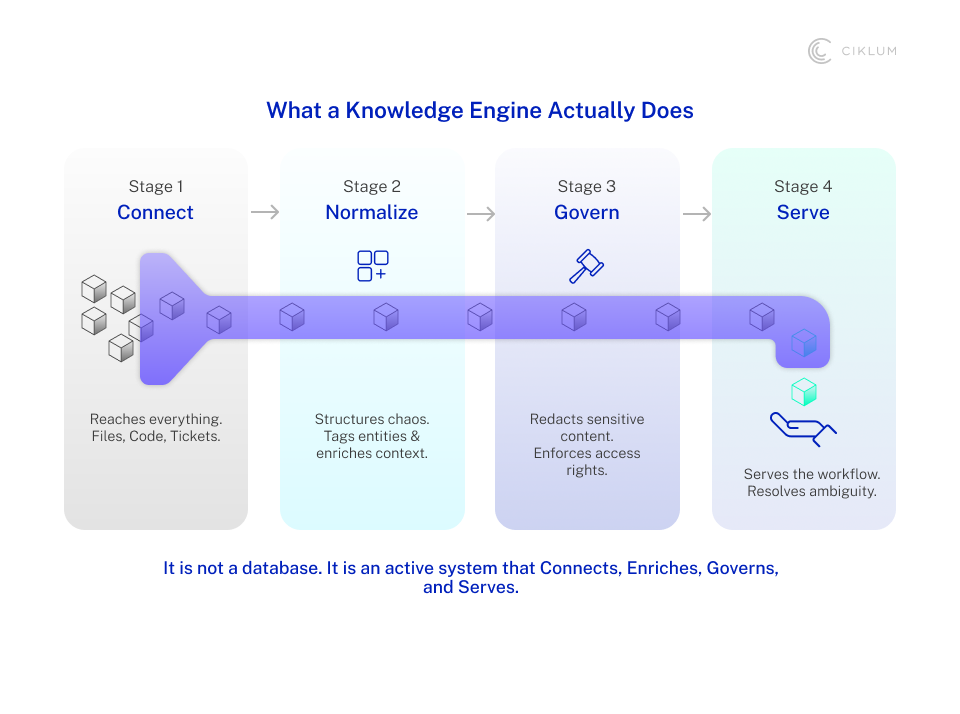

The term gets used loosely, so let’s be precise. A knowledge engine is the connective tissue between your enterprise's raw information and the agents or copilots that need to act on it. It is not a database. It is not a search tool. It is an active, governed system that operates continuously across the organization.

Asana's research suggests as much as 60% of work time is spent searching for information, switching between apps, and chasing status updates. That inefficiency is the exact friction layer AI inherits when you try to scale it. A knowledge engine is designed to collapse it.

It does four things simultaneously.

It connects

Email, file stores, code repositories, ticketing systems, collaboration tools, wherever knowledge lives, the engine reaches it. That includes the systems nobody wants to touch: the COBOL services your core banking platform depends on, the legacy claims engine that never exposed a clean API. AI cannot operate responsibly if it only understands the modern layer and ignores the infrastructure underneath.

It normalizes and enriches

Raw content is not useful to an agent. The engine structures it, tags it, and links it to the business entities that matter — customer, product, case, transaction, risk event. An email thread about a disputed claim becomes part of that claim's context. A Jira ticket becomes traceable to the service it describes.

It governs

Nearly three in four organizations plan to deploy agentic AI within two years, yet only a small minority report mature governance frameworks for autonomous agents. A knowledge engine closes that gap. Access rights are inherited and enforced, and sensitive content is redacted at query time. Policy rules are codified, so what the AI surfaces respects the same constraints your people operate under.

It makes knowledge usable

Not just retrievable. The difference matters. A retrieval system finds documents. A knowledge engine understands context, resolves ambiguity, and serves the right information to the right agent at the right moment in a workflow.

PRODIGY: Built for the Environments Most Platforms Avoid

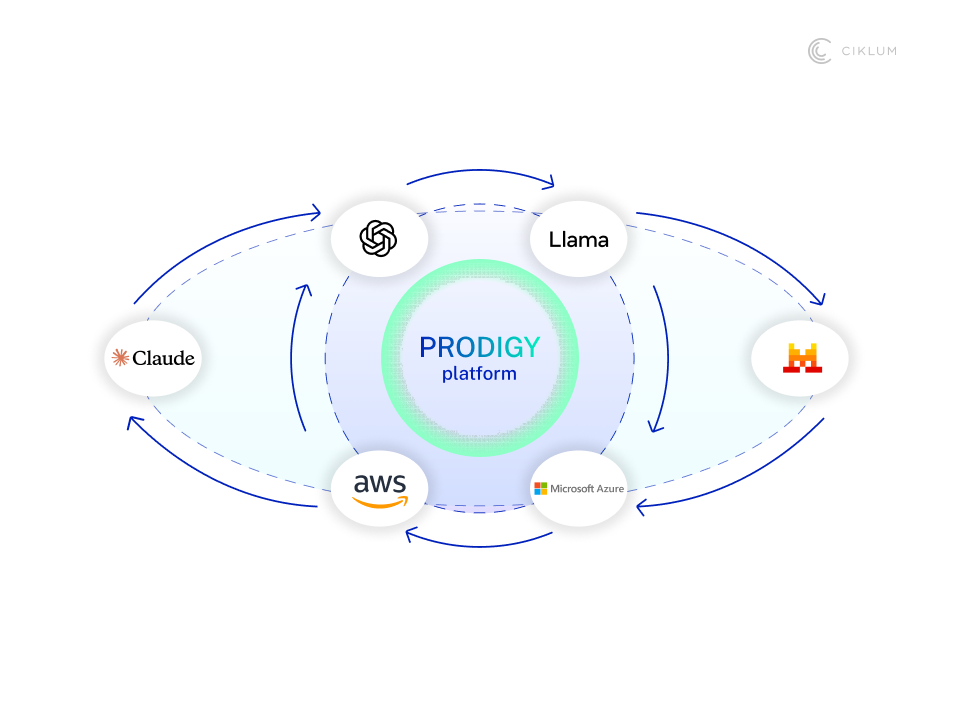

PRODIGY is Ciklum's AI platform — a combination of methodology and proprietary accelerators, of which the Knowledge Engine is one of the most foundational. The distinction between methodology and platform matters because most enterprise AI problems are not purely technical. They are also organizational. They require a structured way of thinking about how AI gets built, governed, and improved inside a large enterprise.

The platform is built around three categories of accelerators.

- Technical accelerators — including the Knowledge Engine — are the agents that speed up the delivery of AI solutions and the AI product development lifecycle itself.

- Horizontal accelerators address cross-functional enterprise problems: supply chain, IT operations, HR, and finance.

- Vertical accelerators go deep and solve specific problems in regulated industries — banking, healthcare, insurance, retail, and more — where the regulatory surface area is large, and the tolerance for error is low.

The Knowledge Engine sits at the foundation of all three. It is what makes every agent actually intelligent in context, rather than just competent in a demo.

The platform is also deliberately vendor-agnostic. Two years ago, GPT-4 dominated enterprise AI. Today, Claude accounts for more than half of enterprise usage. No ten-year vendor contract was built for a market that moves like this. PRODIGY supports over 2,215 models across more than 114 providers, deployable on any cloud. This is because the platform your team is locked into today may not be the right one in eighteen months. Agents deploy directly into your environment, so your data never leaves your estate, and your security team stays in control.

The Real Bottleneck Isn't Code. It's Everything Before It.

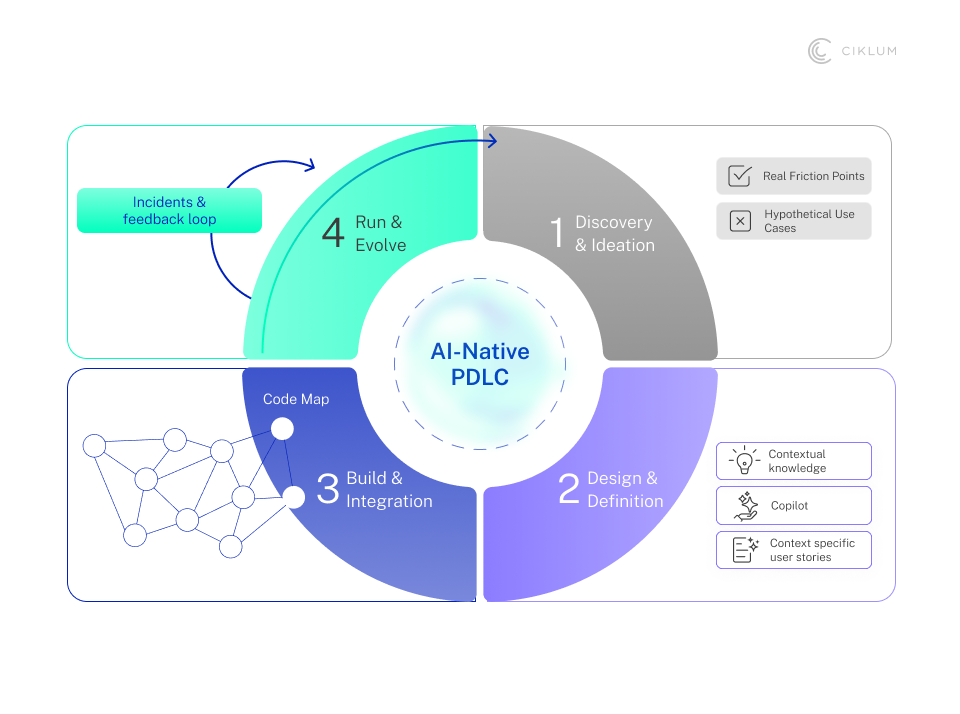

One persistent misconception in enterprise AI is that faster delivery means faster code generation. It does not. Most time lost in AI product development disappears before a single line of code is written: in discovery, requirement definition, and understanding the existing system well enough to know what you are building.

PRODIGY’s Knowledge Engine changes this by making internal knowledge active across the lifecycle.

In the Research and Ideation Phase

Agents mine internal knowledge emails, support tickets, operational logs, and engineering documentation — to surface actual problems and constraints. Not hypothetical use cases. Actual friction points from the systems your people use every day.

In the Design Phase

Teams generate user stories, acceptance criteria, and test cases grounded in actual discovery work. Gaps get flagged before they become defects. Edge cases that would have slipped through to QA get caught at the source.

In the Build Phase

Developers often spend the first few weeks digging through old notes, chasing down the right person, or simply guessing. The Knowledge Engine removes that detective work. Constraints, decisions, and relevant context surface directly in the IDE, so engineers build from a shared understanding of what was agreed, not their best interpretation of it.

In the Run Phase

Monitoring agents track performance, user feedback, and incidents, and surface suggestions for improvement. The product does not get handed over and forgotten, but it keeps learning from what is actually happening.

This is AI-native product development in practice. Not faster sprints within a traditional model, but a fundamentally different way enterprise software is conceived, built, and improved.

Where Enterprise AI Goes Quiet: Legacy Systems and Regulation

Legacy systems and regulatory environments are where most enterprise AI initiatives quietly die — not in a dramatic failure, but in a slow suffocation. The Knowledge Engine is designed explicitly for these environments.

For legacy systems: The problem is usually not capability — it is context. Engineers inherit systems nobody fully understands anymore, where the original decisions and constraints live in someone's head or a document nobody can find. The Knowledge Engine makes that institutional knowledge searchable and brings it into the tools engineers already use. New team members stop spending weeks piecing together project history and start contributing faster.

For regulated environments: The Knowledge Engine creates the audit trail that most AI systems cannot. Every output is traced back to its source artifact, and decisions are recalled on demand. Approval workflows govern what gets written back to SDLC tools. When a regulator asks how a recommendation was generated, the answer exists, and it is documented, not reconstructed.

The Question Every Executive Should Ask Before Their Next AI Investment

The "AI platform" category is becoming crowded. Every major software vendor has layered one onto their existing stack. Most work well enough until your environment proves too complex, too legacy-heavy, or too regulated for the default setup.

PRODIGY is built for what falls outside that default. For the insurer still running a policy system last updated in 2004, where half the business logic lives in legacy code. For the health system, where a data leak is not a PR issue, it is a regulatory and patient risk.

The question worth asking before your next investment is whether your platform has a knowledge layer strong enough to survive contact with your actual environment. Or whether you will be rebuilding it when the next wave of pilots hits the same wall.

Production-ready AI in a complex enterprise is not just about having agents. It is about having the methodology, governance, and knowledge infrastructure to use them the right way. That is where PRODIGY starts and where most platforms stop.

Ready to put a knowledge layer at the centre of your AI strategy? Start the conversation here.

Blogs