Key Takeaways

- Data issues drive most AI failures: fragmented data, poor quality, and governance gaps undermine models and agents.

- Legacy architectures (batch-oriented, siloed, rigid-schema) can't support real-time agents or unstructured data at scale.

- Modern platforms (data fabrics, data meshes, lakehouses) offer unified access, real-time processing, semantic layers, and AI-ready governance.

- Impact: faster ROI, scale across many use cases, and compliance confidence via automated audits.

- Data modernization is strategic: it enables AI and improves decision-making across the organization.

AI automation promises transformative efficiency, yet most efforts stumble without a robust data foundation. Data challenges are a major reason AI initiatives stall: when the data isn't ready, promising projects remain experiments that never reach production.

The real enablers of reliable, scalable, and compliant AI are modern enterprise data platforms. They are often overlooked, but they are essential.

The Data Crisis in AI Automation

AI systems (agents, workflows, and models) depend on data to perceive, reason, and act. Yet enterprise data is often fragmented across silos: legacy ERPs, cloud applications, spreadsheets, and unstructured files like emails and PDFs. This fragmentation leads to:

Poor model performance. Garbage in, garbage out. Without clean, complete, and contextual data, AI systems hallucinate, produce biased outputs, and make inaccurate predictions that erode trust and create risk.

Slow deployment. Data teams often spend the vast majority of their effort preparing and integrating data rather than building automation. Each new use case demands manual work to connect disparate systems, so results arrive only after long delays.

Governance gaps. Without lineage tracking or access controls, organizations can't prove compliance or trust AI outputs. Regulatory risk increases while auditability decreases.

Why Traditional Data Architectures Fail AI at Scale

Legacy setups like periodic batch reporting and siloed warehouses were never designed for AI's demands: real-time access, massive volumes, and multimodal data.

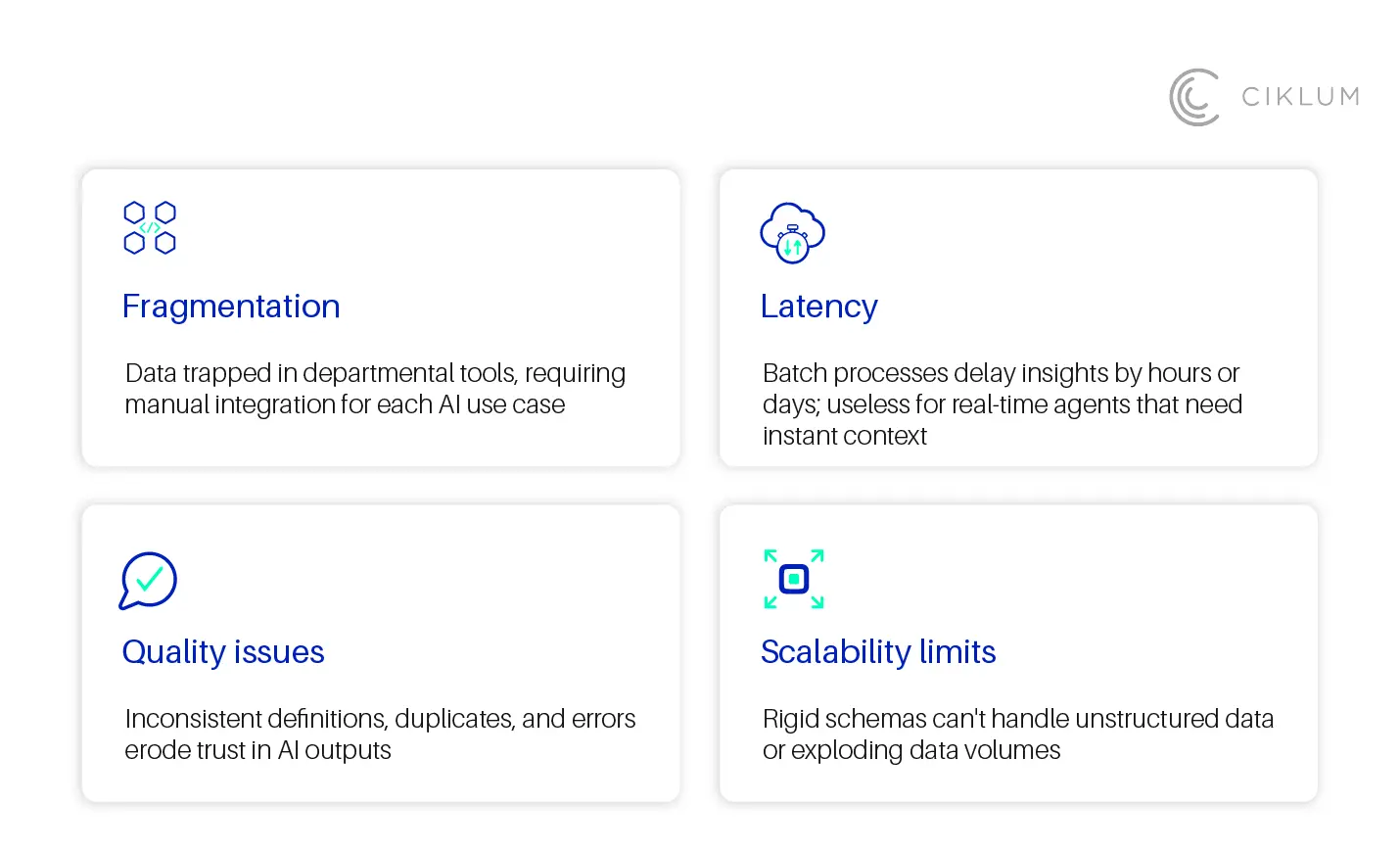

Common pitfalls include:

- Fragmentation: Data trapped in departmental tools, requiring manual integration for each AI use case

- Latency: Batch processes delay insights by hours or days, which is too slow for real-time agents that need instant context

- Quality issues: Inconsistent definitions, duplicates, and errors erode trust in AI outputs

- Scalability limits: Rigid schemas can't handle unstructured data or exploding data volumes

Without modernization, AI automation remains confined to toy projects, unable to touch core business processes where the real value lies.

What Modern Enterprise Data Platforms Provide

Modern data platforms (often implemented as data fabrics, data meshes, or lakehouses) unify and govern data specifically for AI workloads.

Key features include:

Unified access. A single access layer serves structured, unstructured, and real-time data from any source. Agents and models can get what they need without a custom integration for each use case.

Real-time processing. Streaming pipelines provide instant context for agents that must act in the moment, such as those handling fraud detection, customer support, or operational monitoring.
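To make "instant context" concrete, here is a minimal Python sketch of what a streaming pipeline might maintain for a fraud-detection agent. It keeps a per-customer sliding window of recent transactions and updates it on every event. The `Transaction` and `SlidingWindowContext` names are hypothetical, as are the 60-second window and the assumption that events arrive in timestamp order. A production system would typically run this logic inside a dedicated stream-processing engine rather than a single Python process.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Transaction:
    # Hypothetical event shape; real streams carry many more fields.
    customer_id: str
    amount: float
    timestamp: float  # seconds since epoch


class SlidingWindowContext:
    """Maintains each customer's recent activity over a fixed time window."""

    def __init__(self, window_seconds: float):
        self.window_seconds = window_seconds
        self._events: dict[str, deque] = {}

    def ingest(self, tx: Transaction) -> dict:
        """Add one event and return up-to-date context for that customer."""
        events = self._events.setdefault(tx.customer_id, deque())
        events.append(tx)
        # Evict events older than the window (assumes in-order arrival).
        cutoff = tx.timestamp - self.window_seconds
        while events and events[0].timestamp < cutoff:
            events.popleft()
        return {
            "customer_id": tx.customer_id,
            "tx_count": len(events),
            "total_amount": sum(e.amount for e in events),
        }


ctx = SlidingWindowContext(window_seconds=60)
ctx.ingest(Transaction("c1", 20.0, 0))
ctx.ingest(Transaction("c1", 35.0, 30))
latest = ctx.ingest(Transaction("c1", 50.0, 90))  # the t=0 event falls out of the window
```

The agent reads `latest` at decision time instead of waiting for a nightly batch job. The window is updated incrementally, so each event costs roughly constant work rather than a rescan of history.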

Semantic layer. Business-friendly definitions and relationships across datasets ensure that AI systems understand context, not just raw values.
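One way to picture a semantic layer is as a mapping from business terms to the physical columns each source system uses, plus any unit conversions. The minimal sketch below assumes two hypothetical sources (`erp` and `crm`) with made-up column names. It shows the same customer order reading identically once both records are translated into shared definitions.

```python
# Hypothetical semantic model: one business definition, many physical sources.
SEMANTIC_MODEL = {
    "customer_id": {"erp": "CUST_NO", "crm": "customerId"},
    "order_value_usd": {"erp": "NET_AMT_CENTS", "crm": "orderTotal"},
}

# Per-source conversions so every consumer sees the same units.
CONVERSIONS = {
    ("erp", "order_value_usd"): lambda cents: cents / 100,
}


def to_business_view(record: dict, source: str) -> dict:
    """Translate a raw source record into business-level names and units."""
    view = {}
    for term, columns in SEMANTIC_MODEL.items():
        column = columns.get(source)
        if column is None or column not in record:
            continue  # this source does not supply the term
        convert = CONVERSIONS.get((source, term), lambda v: v)
        view[term] = convert(record[column])
    return view


erp_row = {"CUST_NO": "C-1001", "NET_AMT_CENTS": 12550}
crm_row = {"customerId": "C-1001", "orderTotal": 125.5}
erp_view = to_business_view(erp_row, "erp")
crm_view = to_business_view(crm_row, "crm")
```

An AI agent that queries `order_value_usd` never needs to know that one system stores cents under `NET_AMT_CENTS`. That knowledge lives in one place instead of in every prompt or pipeline.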

Built-in governance. Lineage tracking, quality checks, access controls, and compliance automation are embedded, not bolted on. Every AI decision can be traced and audited.
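The idea of governance that is embedded rather than bolted on can be sketched as a wrapper around each transformation step: validate the output, then record where it came from. This minimal Python sketch covers only lineage and quality checks, not access controls. `governed_step`, `LINEAGE_LOG`, and the dataset names are hypothetical stand-ins for what a platform's catalog and data-quality tooling would provide.

```python
import functools
from datetime import datetime, timezone

# Hypothetical stand-in for a lineage catalog service.
LINEAGE_LOG: list[dict] = []


class QualityCheckError(ValueError):
    """Raised when a dataset fails a quality rule."""


def check_quality(rows: list[dict], key: str) -> None:
    """Reject rows with missing or duplicate keys before AI consumers see them."""
    seen = set()
    for row in rows:
        value = row.get(key)
        if value is None:
            raise QualityCheckError(f"missing {key!r} in row {row}")
        if value in seen:
            raise QualityCheckError(f"duplicate {key!r}: {value}")
        seen.add(value)


def governed_step(name: str, inputs: list[str], output: str, key: str):
    """Decorator: run a transformation, validate its output, then record lineage."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            rows = fn(*args, **kwargs)
            # Raises before lineage is recorded or rows are returned.
            check_quality(rows, key)
            LINEAGE_LOG.append({
                "step": name,
                "inputs": inputs,
                "output": output,
                "row_count": len(rows),
                "ran_at": datetime.now(timezone.utc).isoformat(),
            })
            return rows
        return wrapper
    return decorator


@governed_step("merge_customers", inputs=["erp.customers", "crm.contacts"],
               output="curated.customers", key="customer_id")
def merge_customers(erp: list[dict], crm: list[dict]) -> list[dict]:
    # Combine both systems per customer; CRM values win on overlapping fields.
    merged = {r["customer_id"]: dict(r) for r in erp}
    for r in crm:
        merged[r["customer_id"]] = {**merged.get(r["customer_id"], {}), **r}
    return list(merged.values())


customers = merge_customers(
    [{"customer_id": "C1", "segment": "retail"}],
    [{"customer_id": "C1", "email": "c1@example.com"}, {"customer_id": "C2"}],
)
```

Because every step writes a lineage entry, an auditor can trace any record an AI decision used back to `erp.customers` and `crm.contacts`.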

AI-ready optimization. Vector stores support retrieval-augmented generation (RAG), scalable compute supports model training, and the infrastructure is designed for AI workloads from the ground up. In sectors like banking, financial services, and insurance (BFSI), an AI-ready data infrastructure is now a prerequisite for analytics and automation at scale.
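Retrieval-augmented generation depends on finding the stored passages most similar to a query. The toy sketch below shows the core of what a vector store does: exact cosine-similarity search over stored vectors. It uses hand-made three-dimensional vectors in place of real embeddings. `InMemoryVectorStore` and the sample passages are hypothetical. Production vector stores typically use approximate nearest-neighbor indexes so they can search millions of vectors quickly.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class InMemoryVectorStore:
    """Toy vector store: brute-force nearest-neighbor search by cosine similarity."""

    def __init__(self):
        self._items: list[tuple[str, list[float]]] = []

    def add(self, text: str, embedding: list[float]) -> None:
        self._items.append((text, embedding))

    def search(self, query: list[float], k: int = 2) -> list[str]:
        scored = sorted(self._items, key=lambda item: cosine(query, item[1]), reverse=True)
        return [text for text, _ in scored[:k]]


store = InMemoryVectorStore()
# Hand-made "embeddings"; a real system would call an embedding model.
store.add("Refund policy: refunds within 30 days", [0.9, 0.1, 0.0])
store.add("Fraud rules: flag bursts of card transactions", [0.0, 0.2, 0.9])
store.add("Shipping times by region", [0.1, 0.9, 0.1])

context = store.search([0.05, 0.15, 0.95], k=1)
prompt = f"Answer using only this context:\n{context[0]}\n\nQuestion: When should a card be flagged?"
```

The retrieved passage is placed in the prompt so the model answers from governed enterprise data rather than from memory alone.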

These platforms turn data chaos into a strategic asset, enabling AI to deliver accurate, auditable results at scale.

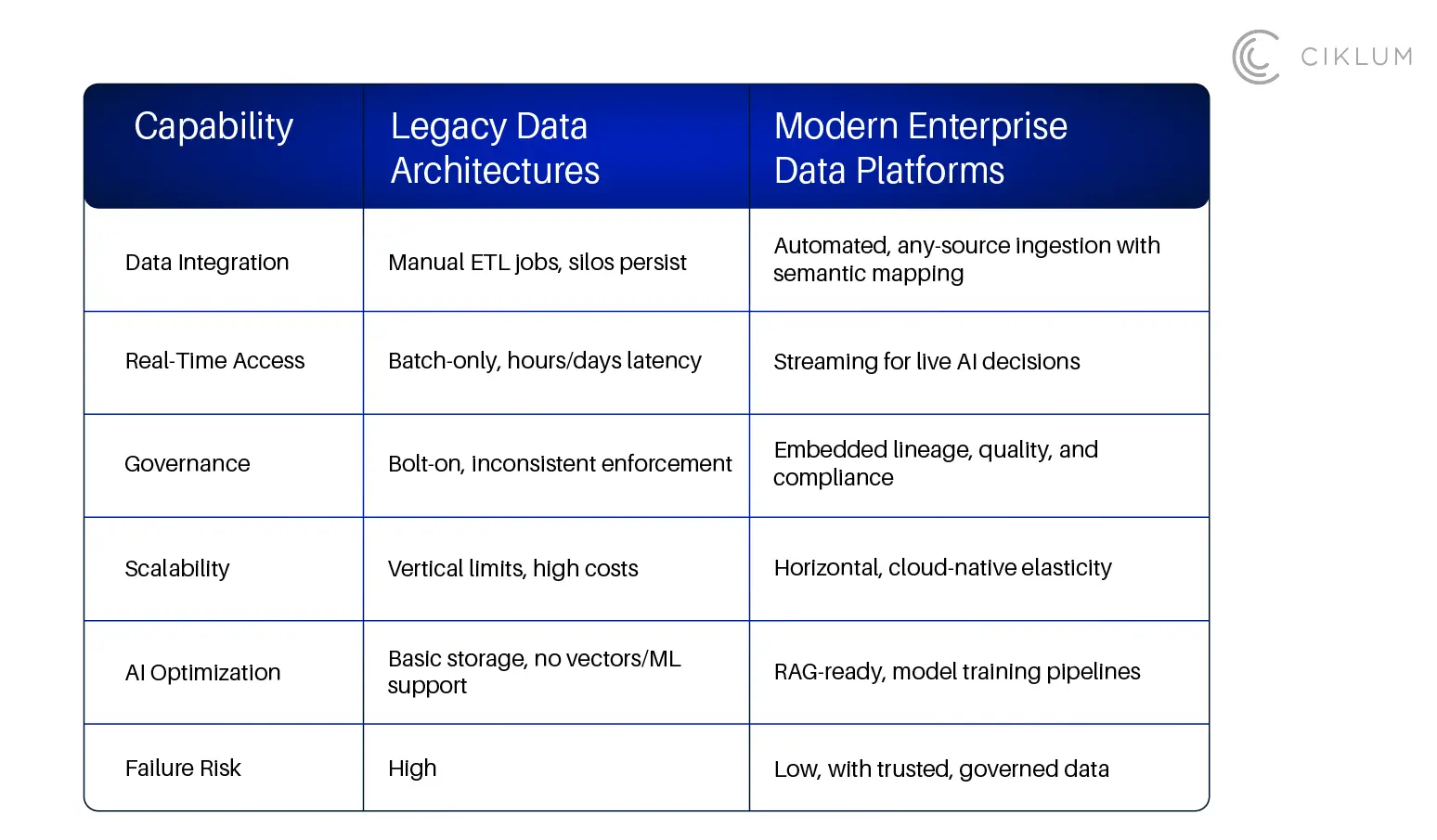

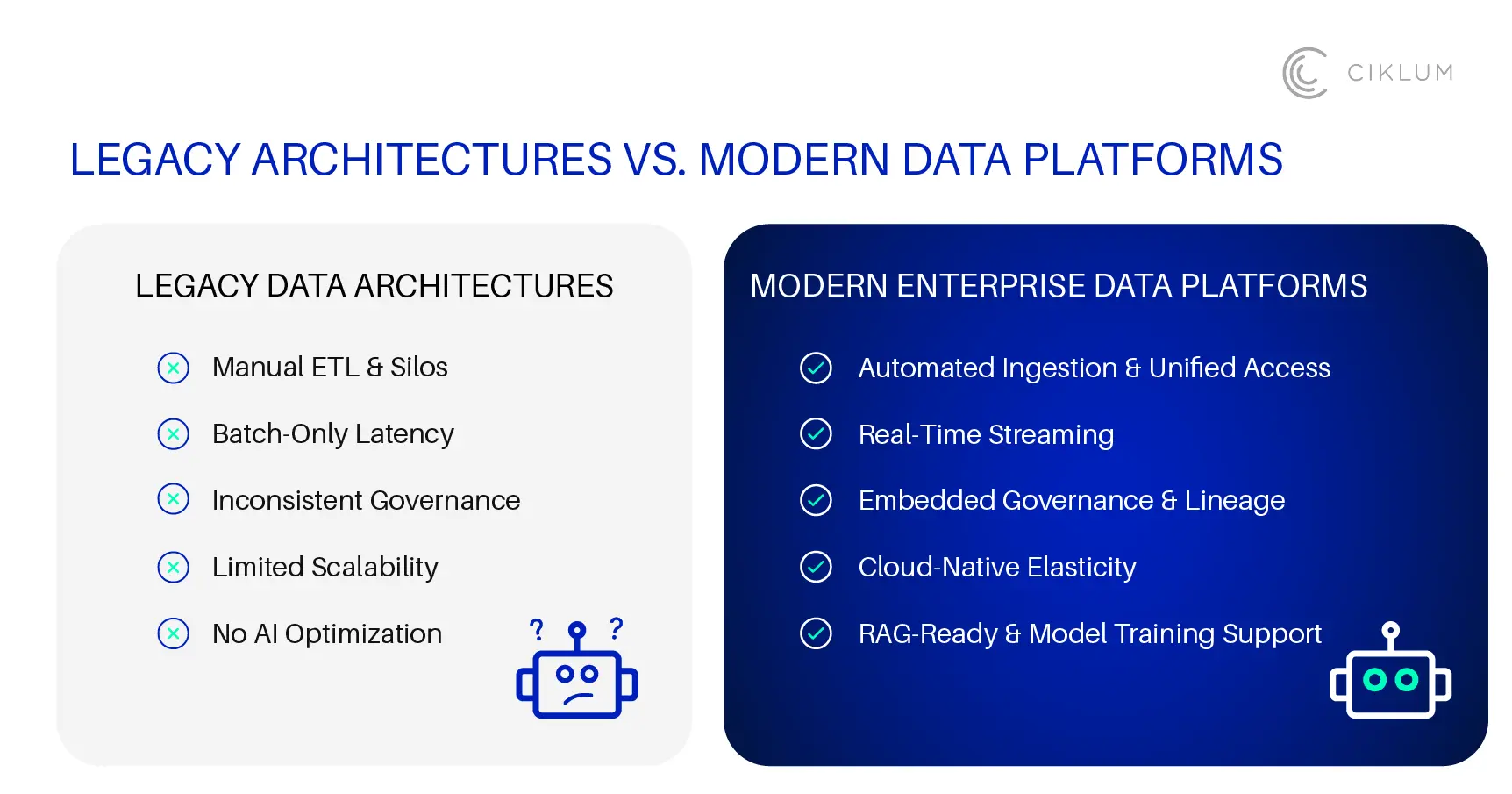

Modern Data Platforms vs. Legacy Architectures: A Shift in Approach

Real-World Impact

Organizations with modern data platforms experience clear, tangible benefits:

- Faster ROI: AI agents process claims faster and more accurately when they work from unified, high-quality data

- Scale without chaos: A single platform supports many use cases across departments such as finance, operations, and support

- Compliance confidence: Automated audits show that AI-driven decisions follow established policies

Consider a bank's fraud detection agent. With a modern data platform, it accesses unified transaction history, customer behavior patterns, and external risk signals in real time, cutting false positives by 40%. Without this foundation, the same agent would reason over incomplete data views and hallucinate, creating both risk and customer friction.

Real-world outcomes back this up. A FinTech client achieved a 74% reduction in processing time after consolidating billing applications and implementing automated ETL and reporting. A global consumer goods leader replaced 22+ disconnected data sources with a centralized Snowflake-based platform, gaining real-time visibility and reducing manual reporting.

Conclusion

AI automation isn’t held back by technology; it’s held back by unready data. Silos, batch delays, and missing governance turn smart agent and workflow ideas into demos that never make it into the real business. Fixing that doesn’t mean buying more models; it means getting the data layer right first. Modern platforms with unified access, real-time pipelines, and built-in governance are what let AI deliver results that are accurate, traceable, and scalable. The teams that get this right treat data as the foundation. The ones that don’t keep restarting the same AI projects.
