Development teams all over the world are constantly on the lookout for ways to make their processes more reliable, effective, innovative and cost-efficient. And in the current climate, it’s no surprise that machine learning and artificial intelligence are among the first places they’re turning.

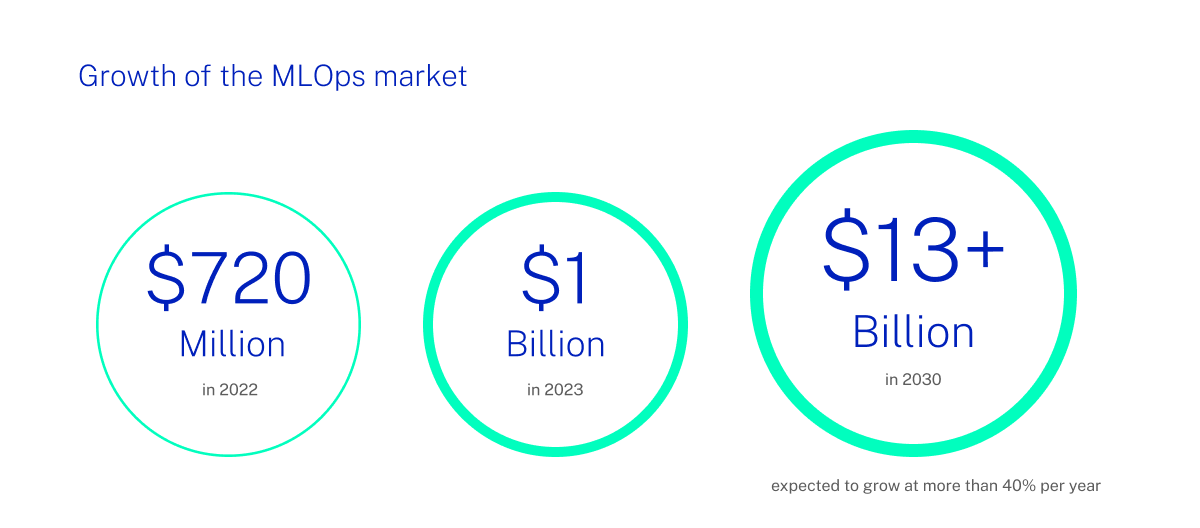

In particular, MLOps is allowing developers to harness the potential of machine learning through the development lifecycle, from initial preparation through deployment and into continuous improvement. This is fuelling major advances in the global MLOps market, which is expected to grow at more than 40% per year through the rest of the decade, and reach more than $13 billion by 2030.

MLOps is proving to be the key for many organizations who have struggled to integrate and evolve machine learning into their businesses. In this blog, we’ll explore how it works in practice, and how the benefits of MLOps can spread far beyond development processes themselves.

What is MLOps?

MLOps is short for ‘Machine Learning Operations’ or ‘Machine Learning Ops’, and refers to the utilization of machine learning models by DevOps teams. The idea is that MLOps can make development processes more reliable and efficient, by introducing processes for building, deploying and enhancing ML models.

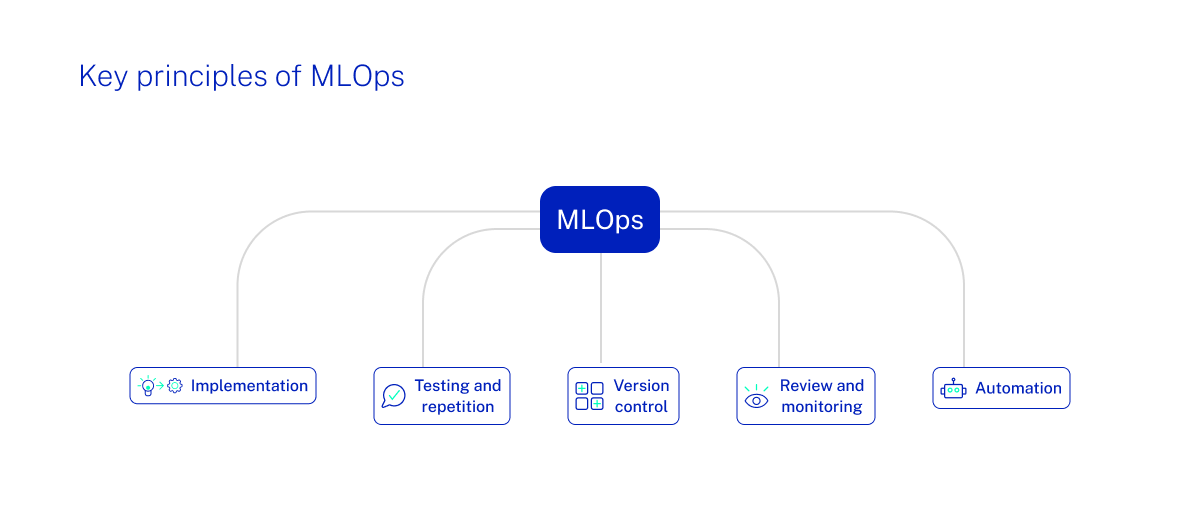

The five key principles of MLOps

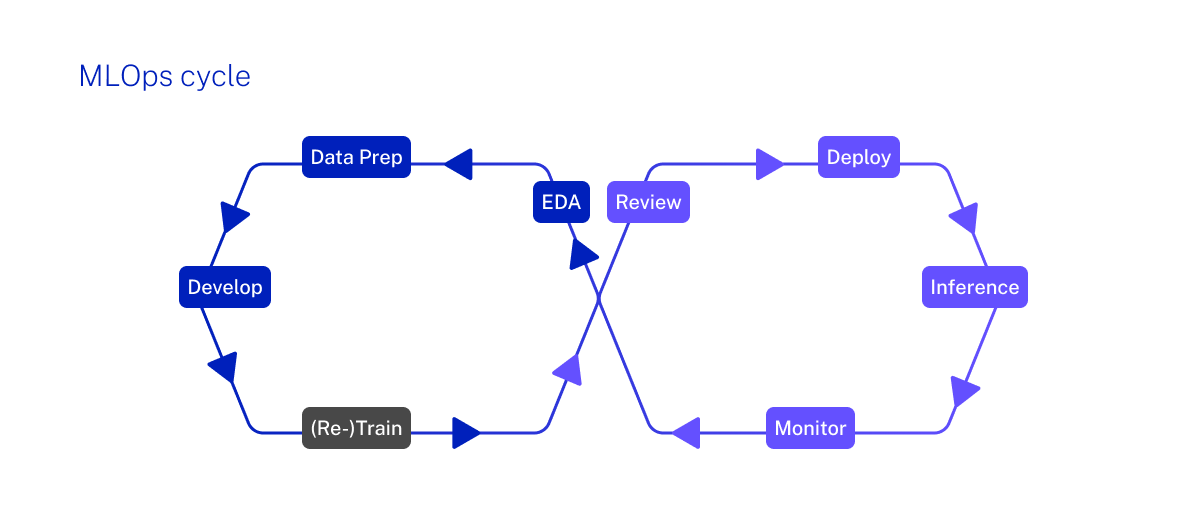

Deployed properly, MLOps feeds into every single part of the development pipeline. Whether it’s the data processing at the start of the cycle, the orchestration in the middle or the continuous training at the end that feeds back into future development, MLOps should be fully embedded into everything a DevOps team does.

Ciklum integrates cloud infrastructure with our own expertly crafted software components. We maximize the use of cloud platform capabilities, leverage the strength of the open-source community, and develop custom made components. These custom solutions effectively bridge the gap between standard MLOps processes provided by various platforms and the unique requirements of each project and client.

By leveraging the power and scalability of cloud infrastructure, combined with tailored logic and processes, MLOps facilitates a more dynamic and nuanced approach to unique challenges beyond traditional DevOps practices, enhancing the efficiency and effectiveness of machine learning workflows. This is most keenly felt in these five areas:

Implementation

MLOps encourages a much more collaborative and inclusive approach to development, between ML and engineering teams, and all other stakeholders involved in the process. They can come together to define core objectives and metrics around how ML is going to be used, in the context of wider engineering and operational targets. This is vital for creating an ML architecture and infrastructure that is not only reliable and secure, but is also fit for its stated purpose.

At Ciklum, we view the unique collaboration dynamics in MLOps as follows:

"The collaboration between teams and team members in this domain is similar to traditional software development, involving roles such as backend developers, DevOps engineers, DBAs, and cloud operations, among others. The key difference, however, lies in the centrality of DATA over UX, and this makes the collaboration to require more dependencies between team members. Some of these dependencies do not exist in traditional software development lifecycles. But they can be automated; and we call this 'MLOps'.

To explain, the primary objective of an AI development team is to manage data effectively, ensuring the AI agent (fundamentally a machine learning model) receives the necessary information for optimal performance. This shift represents a paradigm change, bringing distinct differences in team collaboration. These differences imply that teams are tasked not only with maintaining the app’s functionality but also with sustaining various new kinds of background processes, inherently introducing more complexity than traditional software development. Instead of relying primarily on user input, these teams derive most of their input from data sets, which may or may not be user-generated. This adds a layer of complexity, akin to managing an 'invisible' app alongside the primary application. The 'user' in most scenarios is not a human but another software application, which has different tolerance for errors than humans – in some cases, it is better, in others, it is even more complicated. AI models are not as predictable as traditional software, necessitating increased backup measures, more logic for versioning, and more effort in testing.

These factors amplify interdependencies among team members and demand better coordination. It’s in this setting that MLOps play a crucial role, streamlining operations and protecting processes against both machine and human errors."

Testing and repetition

Every good development process needs a comprehensive and multi-layered testing regime, rooting out any bugs that can cause failures, disruption or poor user experiences. ML development represents a paradigm shift, necessitating the adaptation of testing methods to cover a wider range of scenarios. These scenarios must be wisely and intelligently tailored to novel situations, ensuring comprehensive testing that is responsive to the unique challenges and dynamics of machine learning. This approach is essential for the robust and reliable development of ML applications. With regard to a machine learning model, it means conducting a thorough assessment to make sure that it’s producing the expected outcomes - and redeveloping and retesting until it does. At the same time, it’s essential to ensure that any anomalies won’t have an impact on other programs or processes.
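As a rough illustration of the "assess, then redevelop and retest" loop described above, here is a minimal sketch of a quality gate in Python. Everything in it is an assumption made for illustration: the `toy_model` function stands in for a real trained model, and the 0.9 accuracy threshold is a placeholder for whatever metric a team actually agrees on.

```python
from typing import Callable, Sequence


def accuracy(predict: Callable[[float], int],
             inputs: Sequence[float],
             labels: Sequence[int]) -> float:
    """Fraction of held-out examples the model labels correctly."""
    if not inputs or len(inputs) != len(labels):
        raise ValueError("inputs and labels must be non-empty and the same length")
    correct = sum(1 for x, y in zip(inputs, labels) if predict(x) == y)
    return correct / len(inputs)


def passes_quality_gate(predict, inputs, labels, min_accuracy: float = 0.9) -> bool:
    """Return True only if the model meets the agreed accuracy threshold."""
    return accuracy(predict, inputs, labels) >= min_accuracy


# Hypothetical stand-in for a trained model: labels positive numbers as 1.
def toy_model(x: float) -> int:
    return 1 if x > 0 else 0


# Hypothetical held-out data that the model never saw during training.
holdout_inputs = [-2.0, -1.0, 0.5, 3.0]
holdout_labels = [0, 0, 1, 1]

if passes_quality_gate(toy_model, holdout_inputs, holdout_labels):
    print("Model meets the threshold and can move to the next stage")
else:
    print("Model falls short: retrain and retest before release")
```

In practice a gate like this would typically run automatically on every candidate model, so that a model that misses the threshold never reaches the systems that depend on it.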

Version control for machine learning applications

Compared to traditional software development applications, ML applications exhibit a lower level of predictability, making their behavior less consistently foreseeable. This necessitates a more complex versioning logic, essential for enabling the restoration of models to any previous state and for identifying the causality of any unexpected behavior. This approach ensures that developers can effectively trace and address issues, maintaining the integrity and performance of the ML applications. Ensuring that all new software releases and applications have a clearly-defined and regular schedule of versioning is critical. This process becomes much easier if machine learning models can be relied upon to consistently reproduce results. By simply changing certain variables and measuring fluctuations in those results, teams can better understand and predict model behavior. Such a level of trackable experimentation is crucial, as it means that teams can easily plan out new versions and track changes over a long period of time.
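To make the idea of trackable, restorable versions more concrete, here is a minimal sketch of an in-memory model registry. It is an illustration under stated assumptions rather than a description of any particular tool: the `ModelRegistry` and `ModelVersion` names are hypothetical, and a real setup would persist entries and store the model artifacts themselves.

```python
import hashlib
import json
from dataclasses import dataclass


def fingerprint(obj) -> str:
    """Stable SHA-256 hash of any JSON-serializable object."""
    payload = json.dumps(obj, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


@dataclass(frozen=True)
class ModelVersion:
    version: int
    params: dict            # hyperparameters used for this run
    data_fingerprint: str   # hash of the training data
    metrics: dict           # results measured for this run


class ModelRegistry:
    """Hypothetical append-only record of every trained model version."""

    def __init__(self):
        self._versions = []

    def register(self, params, training_rows, metrics) -> ModelVersion:
        entry = ModelVersion(
            version=len(self._versions) + 1,
            params=dict(params),
            data_fingerprint=fingerprint(training_rows),
            metrics=dict(metrics),
        )
        self._versions.append(entry)
        return entry

    def get(self, version: int) -> ModelVersion:
        """Look up any earlier version, for example to roll back to it."""
        if not 1 <= version <= len(self._versions):
            raise KeyError(f"unknown model version: {version}")
        return self._versions[version - 1]

    def diff_params(self, a: int, b: int) -> dict:
        """Show which parameters changed between two versions."""
        pa, pb = self.get(a).params, self.get(b).params
        return {k: (pa.get(k), pb.get(k))
                for k in sorted(set(pa) | set(pb))
                if pa.get(k) != pb.get(k)}


registry = ModelRegistry()
rows = [[1, 2], [3, 4]]
v1 = registry.register({"learning_rate": 0.1}, rows, {"accuracy": 0.90})
v2 = registry.register({"learning_rate": 0.05}, rows, {"accuracy": 0.92})
print(registry.diff_params(1, 2))
```

Because both runs here share the same data fingerprint, any difference in their metrics can be traced to the single parameter that changed, which is the kind of controlled experiment the paragraph above describes.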

Review and monitoring of machine learning models

Building on the concept of version control, machine learning models can sometimes drift away from their original purpose, and get to a point where they aren’t serving intended business needs as well as they could or should be. This observation underscores the necessity for a constant monitoring approach. Only then can their reliability, availability, and performance be validated over time, through the tracking of metrics as diverse as throughput, error rates, and response time. MLOps practices ensure this approach to reviewing is deeply embedded into development, so that any drop-offs in performance are swiftly identified, and resolved through model retraining.
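One simple way to picture this kind of monitoring is a rolling check on error rates. The sketch below is only an assumption-laden example: the `ErrorRateMonitor` class, the baseline of 10% and the tolerance of 5 percentage points are all hypothetical values chosen for illustration, and real systems usually track several metrics at once.

```python
from collections import deque


class ErrorRateMonitor:
    """Hypothetical monitor that flags a model whose recent error rate
    has drifted above its baseline by more than a set tolerance."""

    def __init__(self, baseline_error_rate: float,
                 tolerance: float = 0.05, window_size: int = 100):
        self.baseline = baseline_error_rate
        self.tolerance = tolerance
        # Only the most recent `window_size` outcomes are kept.
        self.window = deque(maxlen=window_size)

    def record(self, was_error: bool) -> None:
        self.window.append(1 if was_error else 0)

    def error_rate(self) -> float:
        if not self.window:
            return 0.0
        return sum(self.window) / len(self.window)

    def needs_retraining(self) -> bool:
        # Wait for a full window so a few early errors do not raise a false alarm.
        window_full = len(self.window) == self.window.maxlen
        return window_full and self.error_rate() > self.baseline + self.tolerance


monitor = ErrorRateMonitor(baseline_error_rate=0.10, tolerance=0.05, window_size=10)

# Ten predictions with one error: in line with the baseline.
for i in range(10):
    monitor.record(was_error=(i == 0))
healthy = monitor.needs_retraining()

# Three errors in a row push the recent error rate well above the baseline.
for _ in range(3):
    monitor.record(was_error=True)
drifted = monitor.needs_retraining()

print(healthy, drifted)
```

A signal like `needs_retraining()` would then typically feed an alert or an automated retraining job, rather than relying on someone spotting the drop-off by hand.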

Automation

The more automation within the development process, the more capable an ML model can be. ML projects typically encompass more dependencies compared to traditional software projects, leading to an increase in the number of processes involved. Automating these processes can significantly reduce the risk of errors, as it streamlines complex workflows and enhances consistency in execution, thereby improving the overall reliability and efficiency of the ML project lifecycle. Automating data, model and code pipelines not only improves the quality of the training a model receives, but can also speed it up. The ultimate goal will be for MLOps teams to fully automate ML model deployment into core software systems, without the need for any manual intervention at any part of the workflow.
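As a loose sketch of what an automated pipeline with no manual hand-offs might look like, the example below chains data preparation, training, evaluation and deployment into one run. All step names, the "model" (just an average of the data) and the `max_error` threshold are hypothetical simplifications; real pipelines are normally built on an orchestration tool rather than a hand-written loop.

```python
def run_pipeline(steps, context):
    """Run each (name, step) pair in order, stopping early if a step sets 'halt'."""
    state = dict(context)
    completed = []
    for name, step in steps:
        state = step(state)
        completed.append(name)
        if state.get("halt"):
            break
    state["completed_steps"] = completed
    return state


def prepare_data(state):
    # Drop missing values before training.
    rows = [r for r in state["raw_rows"] if r is not None]
    return {**state, "rows": rows}


def train(state):
    # Toy "model": simply the mean of the cleaned data.
    return {**state, "model": sum(state["rows"]) / len(state["rows"])}


def evaluate(state):
    # Halt the pipeline if the worst-case error exceeds the agreed limit.
    error = max(abs(r - state["model"]) for r in state["rows"])
    return {**state, "error": error, "halt": error > state["max_error"]}


def deploy(state):
    return {**state, "deployed": True}


PIPELINE = [
    ("prepare_data", prepare_data),
    ("train", train),
    ("evaluate", evaluate),
    ("deploy", deploy),
]

good_run = run_pipeline(PIPELINE, {"raw_rows": [1.0, None, 2.0, 3.0], "max_error": 1.5})
blocked_run = run_pipeline(PIPELINE, {"raw_rows": [1.0, None, 2.0, 3.0], "max_error": 0.5})

print(good_run["completed_steps"])
print(blocked_run["completed_steps"])
```

The design choice worth noting is the evaluation step acting as a gate: deployment only happens when the model passes, so automation speeds up the workflow without removing the safety check.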

What are the benefits of applying MLOps?

Taking a holistic and all-encompassing approach to MLOps can take development processes to the next level - which can make a real difference in a landscape where driving competitive advantage is crucial. In our experience, the organizations that are getting MLOps right are enjoying:

More focus on data and on behavior


By incorporating MLOps practices, software and product development teams can achieve a smoother process with fewer interruptions and errors, allowing for a greater focus on the outcome. The inherent unpredictability of software components in AI projects makes MLOps practices close to an essential requirement, helping ensure that projects do not encounter unexpected delays. This is particularly crucial given the critical emphasis on data and model behavior in these projects.

Better testing and governance


Many organizations have struggled with scaling AI lifecycles, because it can be particularly difficult to validate with transparency. MLOps provides the perfect antidote to this problem, as it makes sure that engineers demonstrate how models were built and where they were deployed, thanks to automatic reporting and strict governance. Good MLOps practices will also give scope for auditing, explanations of decision-making, result measurement, compliance tracking, and an open forum for challenging models.

Wider automation for efficiency


The large volumes of data involved in machine learning mean that doing many of the preparation and processing tasks manually can soak up a lot of valuable staff time. What’s more, these manual processes are prone to human error and can often fail to deliver the results that are expected. MLOps allows most - or ideally, all - of these time-consuming jobs to be automated, for faster lifecycles, more reliable data, and a more efficient approach to training, deployment and retraining alike.

Greater innovation


Just like its ‘sister’ technology AI, machine learning is evolving and advancing at breakneck speed. New techniques and algorithms are coming on stream all the time, and it’s the organizations that can adopt them both quickly and reliably that are best-placed to gain competitive advantage. MLOps expedites the development and deployment of new models and techniques, making it far faster to improve model efficiency and accuracy.

Stronger organizational productivity


According to Gartner, only half of all machine learning models actually reach production, meaning the process is still largely inefficient given the scale of time and resources that can be involved. MLOps can play an important part in improving the rate of success in many ways, but especially through streamlining and automation, freeing up data scientist and engineer time that can then be used to focus on tasks where human input is essential. This approach means that organizations get the best of human and automated effort in the places where each is best-suited, maximizing the chances of a productive, profitable deployment.

In summary

To make MLOps work, it takes far more than just the right machine learning tools. Those tools need to be deployed in the right places and used in the right ways, in order to generate the biggest efficiency and innovation benefits and maximize the investment. And that’s where the expertise of Ciklum comes in.

We have over a decade of experience in deploying MLOps into businesses big and small, across a range of different industries. Our custom machine learning model creation means we can help you access the latest technologies and practices, and benefit from our deep, cross-domain expertise. Whether your development focuses on the cloud, on-premise, embedded or mobile optimization, we can work closely with you to understand your objectives and craft tailored solutions that deliver in the context of your business.

Find out more on our MLOps philosophy here, or get in touch with our team to discuss your specifics.
